2023

Puzzle Sandbox

I completed this project as part of my Master’s degree at Goldsmiths, University of London. It is designed to immerse players in a shared experience where they work together to solve puzzles and bring back items that update the main hub.

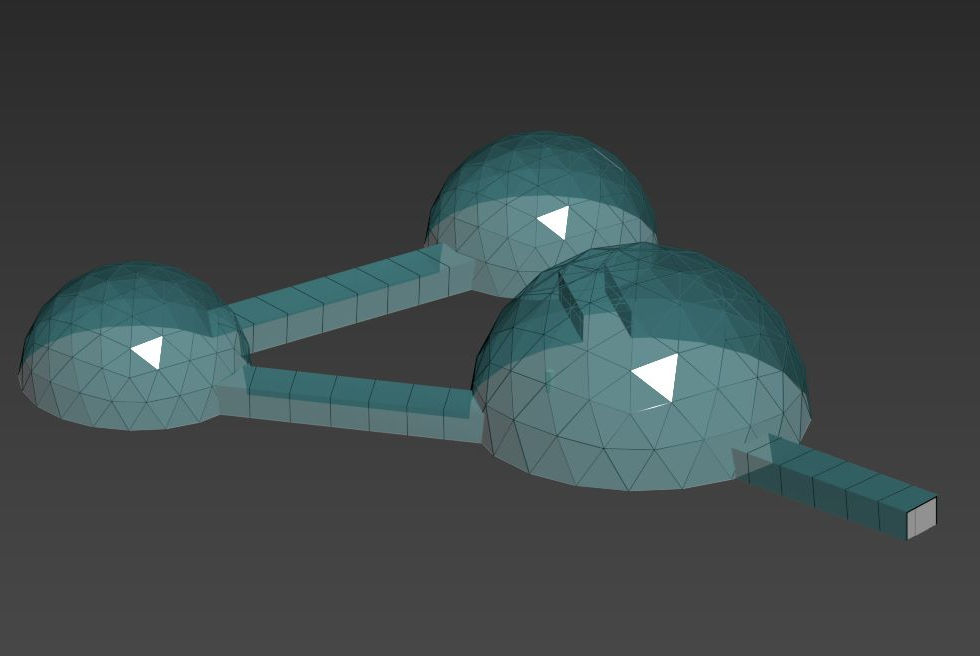

Puzzle Sandbox is a program we created for users who would rather hang out online with friends than in VR rooms that are open to anyone. Players can walk around and chat from anywhere in the main scene. The team added puzzles to give users something to do in the world, and solving them lets users update their main area. Users can join at any time but cannot jump into a puzzle that is already in progress. In the future, the team wants to be able to add or swap puzzles, making the program modular. The program currently has most core systems implemented, a tutorial puzzle that shows how the puzzle system works, and a second, nearly finished puzzle that shows how the system can be modular.

Contributions:

Project Manager:

For this project I worked with the team to record tasks in a GitHub Project and kept people on task so we could focus on completing the project. Together we agreed on a timeline that we thought would work, and I checked in each week to see where we were and adjust as needed, following agile practices. This time we used a sprint model: we decided what we wanted to do during each two-week sprint and concerned ourselves less with the overall goal. We kept that goal in mind but focused on what we could get done within a sprint. This worked better for us because we were all split across other projects and needed to stay focused on short-term goals.

Lead Developer:

I worked with the team to set up the project and built the basic game management system that lets us swap scenes in and out for each puzzle. I put together the puzzle choice system and added push buttons with a tactile feel, using small controller vibrations and sounds to immerse the player. These buttons give the player control over entering the multiplayer room and teleporting to the puzzles.
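As a rough illustration of the scene-swapping idea, the sketch below keeps a small registry of puzzles in plain C#, with no Unity dependencies. It is not the project's actual code: `PuzzleRegistry`, `PuzzleEntry`, and the "one scene per puzzle" layout are assumptions made for the example, and a real manager would also have to load the scene and sync state across the network.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical record describing one swappable puzzle.
public sealed class PuzzleEntry
{
    public string Id { get; }
    public string SceneName { get; }   // assumed: one scene per puzzle
    public bool InProgress { get; set; }

    public PuzzleEntry(string id, string sceneName)
    {
        Id = id;
        SceneName = sceneName;
    }
}

// Hypothetical registry: puzzles can be added or swapped without
// touching the code that drives the teleport buttons.
public sealed class PuzzleRegistry
{
    private readonly Dictionary<string, PuzzleEntry> _puzzles =
        new Dictionary<string, PuzzleEntry>();

    // Adds a puzzle, or replaces the one already stored under the same id.
    public void Register(PuzzleEntry entry) => _puzzles[entry.Id] = entry;

    // Returns the scene to load, or null if the puzzle is unknown or
    // already in progress (late joiners may not enter a running puzzle).
    public string TryStart(string id)
    {
        if (!_puzzles.TryGetValue(id, out var entry) || entry.InProgress)
            return null;
        entry.InProgress = true;
        return entry.SceneName;
    }

    // Marks a puzzle as finished so it can be started again.
    public void Finish(string id)
    {
        if (_puzzles.TryGetValue(id, out var entry))
            entry.InProgress = false;
    }
}
```

In a layout like this, a teleport button would call `TryStart` with a puzzle id and only trigger the scene load when a scene name comes back, so swapping a puzzle means registering a different entry under the same id.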

I also worked on the in-game UI code. I created the start screen, the teleport menu, and the wrist menu, using Juhi's assets. Both the start screen and the teleport menu are world-space menus that turn on and off in response to events. The start screen turns off when the user teleports into the room, and the teleport menu appears with the teleport button after the tutorial puzzle is solved (all users need to find a red cube and put it into the table). The personal UI appears on the wrist when the user presses the left controller's menu button, which also turns on a laser pointer so they can interact with that UI or the others.
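The tutorial gate described above can be sketched in plain C#. This is a minimal sketch, not the project's script: it assumes every connected player must place a cube before the teleport menu appears, which is only one reading of the rule, and `TutorialGate` and its members are hypothetical names.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical gate: raises Solved once every joined player has placed a cube.
public sealed class TutorialGate
{
    private readonly HashSet<string> _players = new HashSet<string>();
    private readonly HashSet<string> _placed = new HashSet<string>();

    public bool IsSolved { get; private set; }

    // A UI script could subscribe here to show the teleport menu.
    public event Action Solved;

    public void PlayerJoined(string playerId) => _players.Add(playerId);

    public void CubePlaced(string playerId)
    {
        // Ignore unknown players and anything after the gate has opened.
        if (IsSolved || !_players.Contains(playerId)) return;

        _placed.Add(playerId);
        if (_placed.Count == _players.Count)
        {
            IsSolved = true;
            Solved?.Invoke();
        }
    }
}
```

The sketch does not handle players leaving mid-tutorial; a networked version would need to remove them from both sets and re-check the condition.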

I also put together a physics-based movement system that, in our testing, worked better than the built-in character controller. I found a great online tutorial by Valem Tutorials that explained how to build such a system, and I implemented it with a few changes for our multiplayer experience. The idea is to eventually let players climb walls, as in Boneworks, which will allow for more interesting puzzles in the future.

I worked on adding Meta avatars to the project. I got an avatar into the world using Meta’s Avatar SDK but, unfortunately, could not get it working in a standalone build of the project. I also migrated our entire project to the Universal Render Pipeline (URP), as the shaders used by the Avatar SDK required it.

Scripts I created:

· PhysicsRig.cs – implemented using the Valem Tutorials – Full Body Physics in VR tutorial

· XRButton.cs – updated from Create with VR’s script

Sample walkthrough of the application

Contributions Timeline

User experience flow